|

|

Multiple Start Search Paths and Root Aliases in Crawler

Our technical SEO tool supports mixing multiple domains and additional start scan paths.

Websites with Domain Mirrors or Multiple Root Path Urls

While usually not recommended because of

duplicate content issues,

some websites mix domains, links and www and non-www usage in URLs.

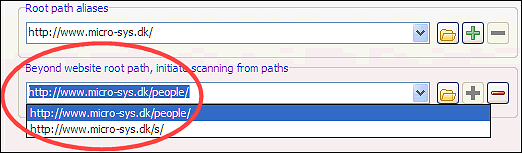

In such cases, after configuring the site root to be scanned, which is usually the primary domain, make a list af root aliases.

If you intend to create XML sitemaps, please check this page about domains in XML sitemaps.

Note: You need to use the [+] button to add a root path alias into the dropdown list.

In Scan website | Crawler options you can configure the technical SEO tool to automatically add common root path aliases:

If you intend to create XML sitemaps, please check this page about domains in XML sitemaps.

Note: You need to use the [+] button to add a root path alias into the dropdown list.

In Scan website | Crawler options you can configure the technical SEO tool to automatically add common root path aliases:

The Website Root Path and How It Affects Crawling

If you use http://example.com/blogs/ as root, all paths

outside (excluding root path aliases)

such as e.g. http://example.com/forum/

will neither be included in output nor for analysis.

A better alternative might be to keep the website root as http://example.com/ followed by using analysis filters, output filters and additional start search paths (see below) to control your website crawl and resulting output.

Note: In this case you may need to uncheck option Scan website | Crawler options | Fix internal URLs if website root URL redirects to a different address.

A better alternative might be to keep the website root as http://example.com/ followed by using analysis filters, output filters and additional start search paths (see below) to control your website crawl and resulting output.

Note: In this case you may need to uncheck option Scan website | Crawler options | Fix internal URLs if website root URL redirects to a different address.

Scan Websites From Multiple Start Search Paths

Websites with site areas that have no

incoming links from within the rest of the website can sometimes cause a problem.

Remember that crosslinking hidden pages will not help if none of them are linked from anywhere else in the website.

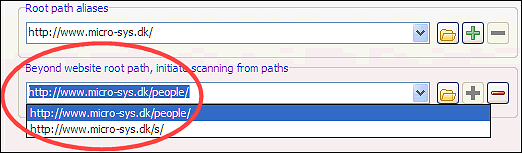

This problem can easily be overcome in our technical SEO software. It is possible to start a website scan from multiple paths in addition to website directory root.

In newer versions, there are also buttons for quickly adding additional start search from addresses by:

Note: You need to use the [+] button to add additional start scan paths into the dropdown list.

Note: It is often better to make sure your website is cross linked, so crawlers can find all pages on their own.

Remember that crosslinking hidden pages will not help if none of them are linked from anywhere else in the website.

This problem can easily be overcome in our technical SEO software. It is possible to start a website scan from multiple paths in addition to website directory root.

In newer versions, there are also buttons for quickly adding additional start search from addresses by:

- importing list of URLs from search engines.

- importing list of URLs from a file.

- importing list of URLs from a website page URL.

- addding common urls such as typical xml sitemap paths.

Note: You need to use the [+] button to add additional start scan paths into the dropdown list.

Note: It is often better to make sure your website is cross linked, so crawlers can find all pages on their own.